Use a host with good CPU capacity (dual Xeon or Opteron), at least 2 GB of RAM, and at least 40 GB of free disk space. Do whatever you can to make it highly reliable (UPS power supply, RAID disk configuration, hot-swappable spares, temperature-controlled machine room, etc.). For a high-traffic project, use a machine with 8 GB of RAM or more and 64-bit processors.

When you initially create a BOINC project using make_project, everything runs on a single host: MySQL database server, web server, scheduling server, daemons, tasks, and file upload handler. Of these tasks, the MySQL server does the most work (typically as much as all the others combined). So, if you need to increase the capacity of your server, the first step is to move the MySQL server to a separate host (preferably a fast computer with lots of memory). Specify this host in the project configuration file.

You can use MySQL's replication mechanism to create a read-only replica. If you add this to your configuration file,
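A minimal sketch of the "specify this host" step, assuming the project configuration file is BOINC's `config.xml` with a `<boinc><config>...</config></boinc>` layout, and assuming the database host is set by a `<db_host>` element and a replica by `<replica_db_host>`. Those tag names are assumptions to check against your BOINC version's documentation; the function `set_db_hosts` and the host names are hypothetical.

```python
import xml.etree.ElementTree as ET
from typing import Optional


def set_db_hosts(config_xml: str, db_host: str,
                 replica_db_host: Optional[str] = None) -> str:
    """Return config_xml with <db_host> (and optionally <replica_db_host>) set.

    Assumes a <boinc><config>...</config></boinc> layout; the tag names
    are assumptions, not confirmed BOINC API.
    """
    root = ET.fromstring(config_xml)
    config = root.find("config")
    if config is None:
        raise ValueError("no <config> element found")

    def set_tag(tag: str, value: str) -> None:
        elem = config.find(tag)
        if elem is None:  # create the element if it is not there yet
            elem = ET.SubElement(config, tag)
        elem.text = value

    set_tag("db_host", db_host)
    if replica_db_host is not None:
        set_tag("replica_db_host", replica_db_host)
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    original = "<boinc><config><db_host>localhost</db_host></config></boinc>"
    # Hypothetical host names for the new MySQL server and its replica.
    print(set_db_hosts(original, "db.example.org", "replica.example.org"))
```

Note that ElementTree's default parser discards XML comments, so run something like this on a copy of the file and keep the original as a backup.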

Symptoms of an overloaded server include database queries that take minutes or hours to complete. If you experience these problems, try the following (in order).

Use Fast CGI. Fast CGI uses long-lived processes that handle lots of requests, instead of creating a new process for each request. This eliminates the overhead of creating processes and connecting to the database. To set it up:

1. Build (or install with yum or apt-get) an Apache server with mod_fcgid (or mod_fastcgi, an older, compatible module).
2. Compile the Fast CGI versions of the scheduler (`fcgi`) and the file upload handler (`fcgi_file_upload_handler`).
3. Copy these to your project's cgi-bin directory, with the names `cgi` and `file_upload_handler`.
4. Edit the following line in your nf (usually in /etc/httpd/conf.d/nf):
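The benefit of Fast CGI described above (one long-lived process serving many requests) can be illustrated with a toy model. This is a conceptual simulation, not BOINC's scheduler code or the FastCGI protocol: it counts database connections opened in a per-request, CGI-style loop versus a single long-lived worker. In-memory SQLite stands in for MySQL, process-creation cost is not modeled, and all names are hypothetical.

```python
import sqlite3


class ConnectionCounter:
    """Opens in-memory SQLite connections and counts how many were opened."""

    def __init__(self) -> None:
        self.opened = 0

    def connect(self) -> sqlite3.Connection:
        self.opened += 1
        return sqlite3.connect(":memory:")


def handle_request(conn: sqlite3.Connection, request_id: int) -> int:
    # Stand-in for real scheduler work: run one trivial query.
    (value,) = conn.execute("SELECT ?", (request_id,)).fetchone()
    return value


def cgi_style(request_ids, counter: ConnectionCounter) -> list:
    """Classic CGI: a fresh process, and so a fresh DB connection, per request."""
    results = []
    for rid in request_ids:
        conn = counter.connect()
        try:
            results.append(handle_request(conn, rid))
        finally:
            conn.close()
    return results


def fastcgi_style(request_ids, counter: ConnectionCounter) -> list:
    """FastCGI-like: one long-lived worker reuses a single connection."""
    conn = counter.connect()
    try:
        return [handle_request(conn, rid) for rid in request_ids]
    finally:
        conn.close()


if __name__ == "__main__":
    requests = range(1000)
    cgi, fast = ConnectionCounter(), ConnectionCounter()
    assert cgi_style(requests, cgi) == fastcgi_style(requests, fast)
    print(f"CGI-style connections opened: {cgi.opened}")      # 1000
    print(f"FastCGI-style connections opened: {fast.opened}")  # 1
```

Both loops return identical results; only the setup cost differs, and that difference grows linearly with the request rate.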

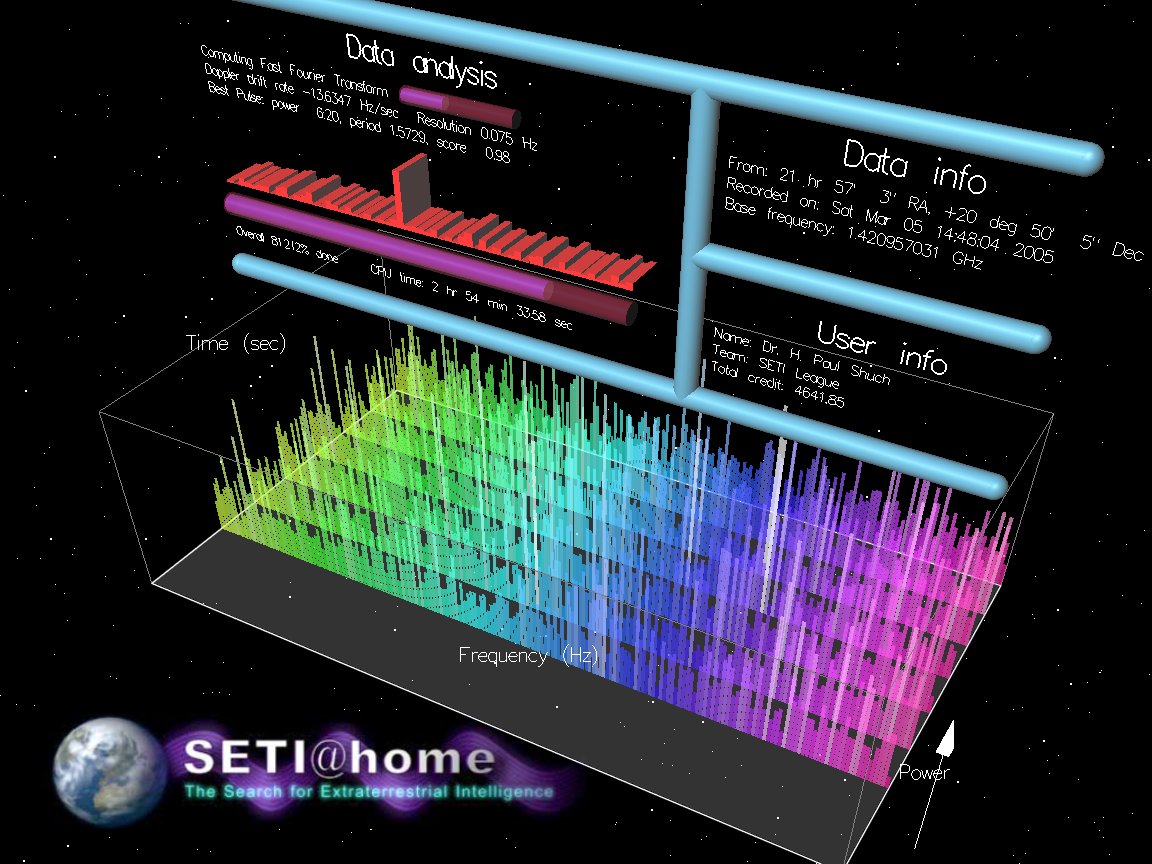

The BOINC server software is designed so that a project with tens of thousands of volunteers can run on a single server computer. However, the capacity of this computer may eventually be exceeded.

The server is moving to a new physical location. This move may cause intermittent interruptions of service over the next several days.

A nanoHUB machine that handled results returned from the BOINC server froze, stranding 100k successfully completed results along with some live nanoHUB sessions. We saved all the results and set up a new dedicated machine to handle that part of the workflow.

We're having trouble with a nanoHUB server that sends jobs to the BOINC server for workunit (WU) production. We had to temporarily halt WU production while we fix the other machine.

The BOINC server for this project is connected to several other key parts of the nanoHUB infrastructure. We had to upgrade the BOINC server in January to support the volume of WUs our crunchers consume. The WUs are created from nanoHUB input files by another server, and we had to do significant upgrades to that server as well. The database of in-progress jobs (via BOINC and other venues) was consuming all the memory on that machine, leading to swapping. That server is critical for all nanoHUB jobs, including courses at several universities, so the DB upgrade had to be thoroughly tested before we rolled it out. We are tiptoeing back into the creation of WUs, and we're watching everything closely.

TLDR: our crunchers consume jobs at a rate at least one order of magnitude higher than our other computing venues, so it stresses all the infrastructure.
